WatchGuardian: Enabling User-Defined Personalized Just-in-Time Intervention on Smartwatch

Abstract

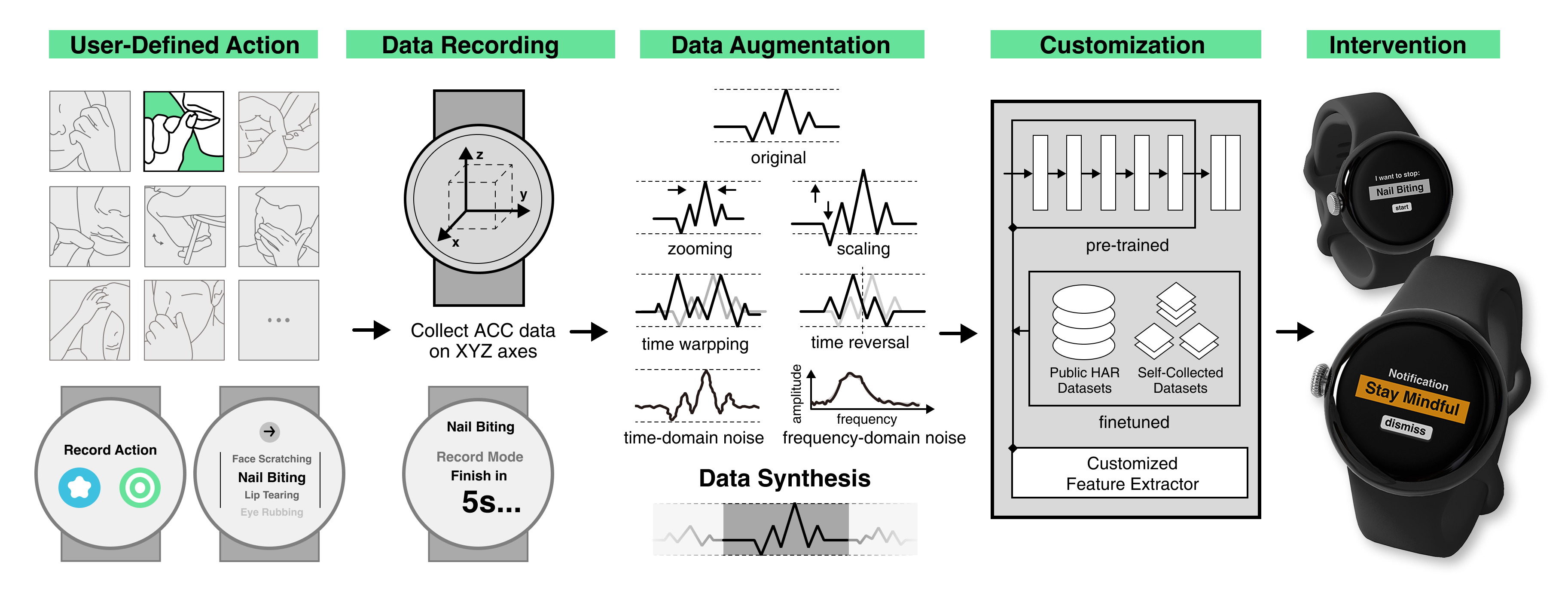

While just-in-time interventions (JITIs) have effectively targeted common health behaviors, individuals often have unique needs to intervene in personal undesirable actions that can negatively affect physical, mental, and social well-being. We present WatchGuardian, a smartwatch-based JITI system that empowers users to define custom interventions for personal actions with few samples. To detect new actions from limited data, we developed a few-shot learning pipeline that fine-tuned a pre-trained inertial measurement unit (IMU) model on public hand-gesture datasets. We then designed a data augmentation and synthesis process to train additional classification layers for customization. Our offline evaluation with 26 participants showed that with three, five, and ten examples, our approach achieved accuracies of 76.8%, 84.7%, and 87.7%, and F1 scores of 74.8%, 84.2%, and 87.3%, respectively. We then conducted a four-hour intervention study to compare WatchGuardian against a rule-based intervention. Our results demonstrated that our system led to a significant reduction of 64.0±22.6% in undesirable actions, substantially outperforming the baseline by 29.0%. Our findings underscore the effectiveness of a customizable, AI-driven JITI system for individuals in need of behavioral intervention in personal undesirable actions. We envision that our work can inspire broader applications of user-defined personalized intervention with advanced AI solutions.

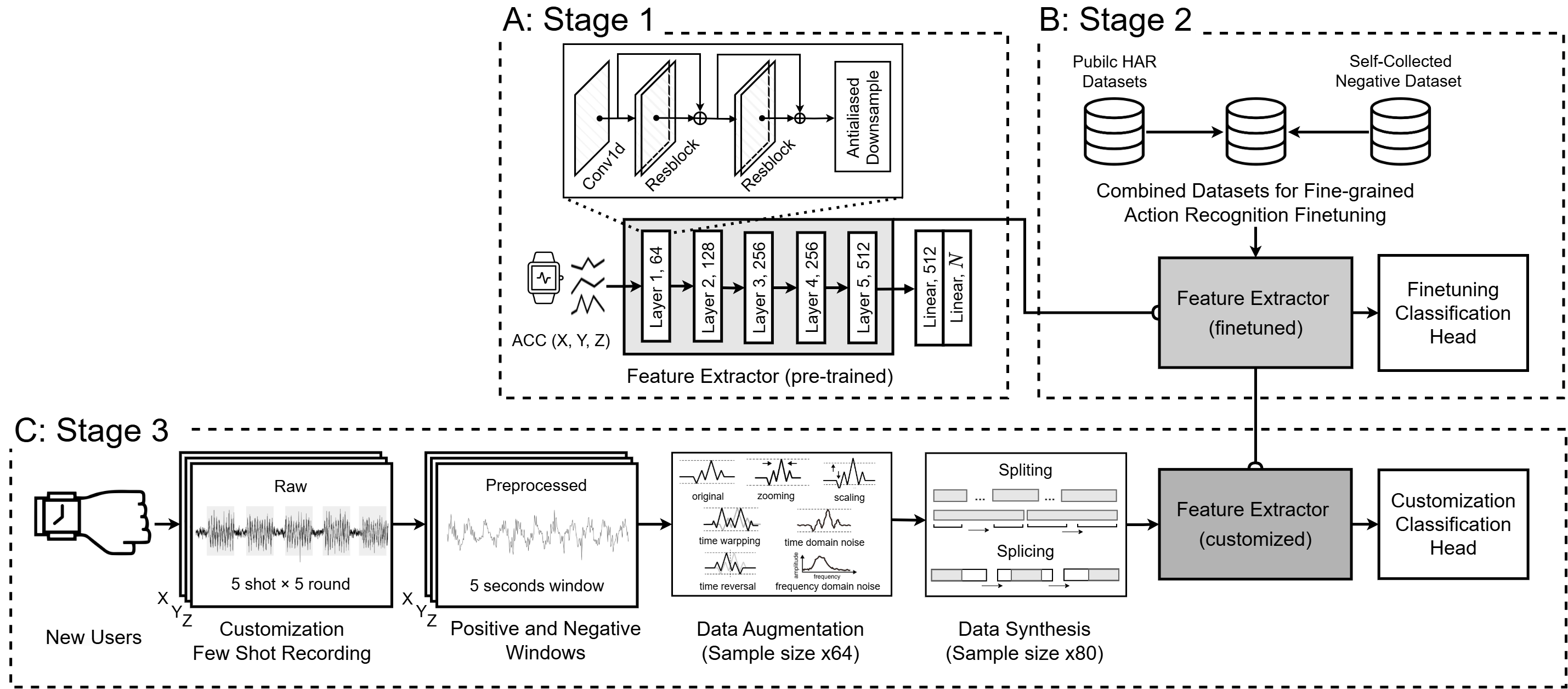

Few-shot Learning Pipeline

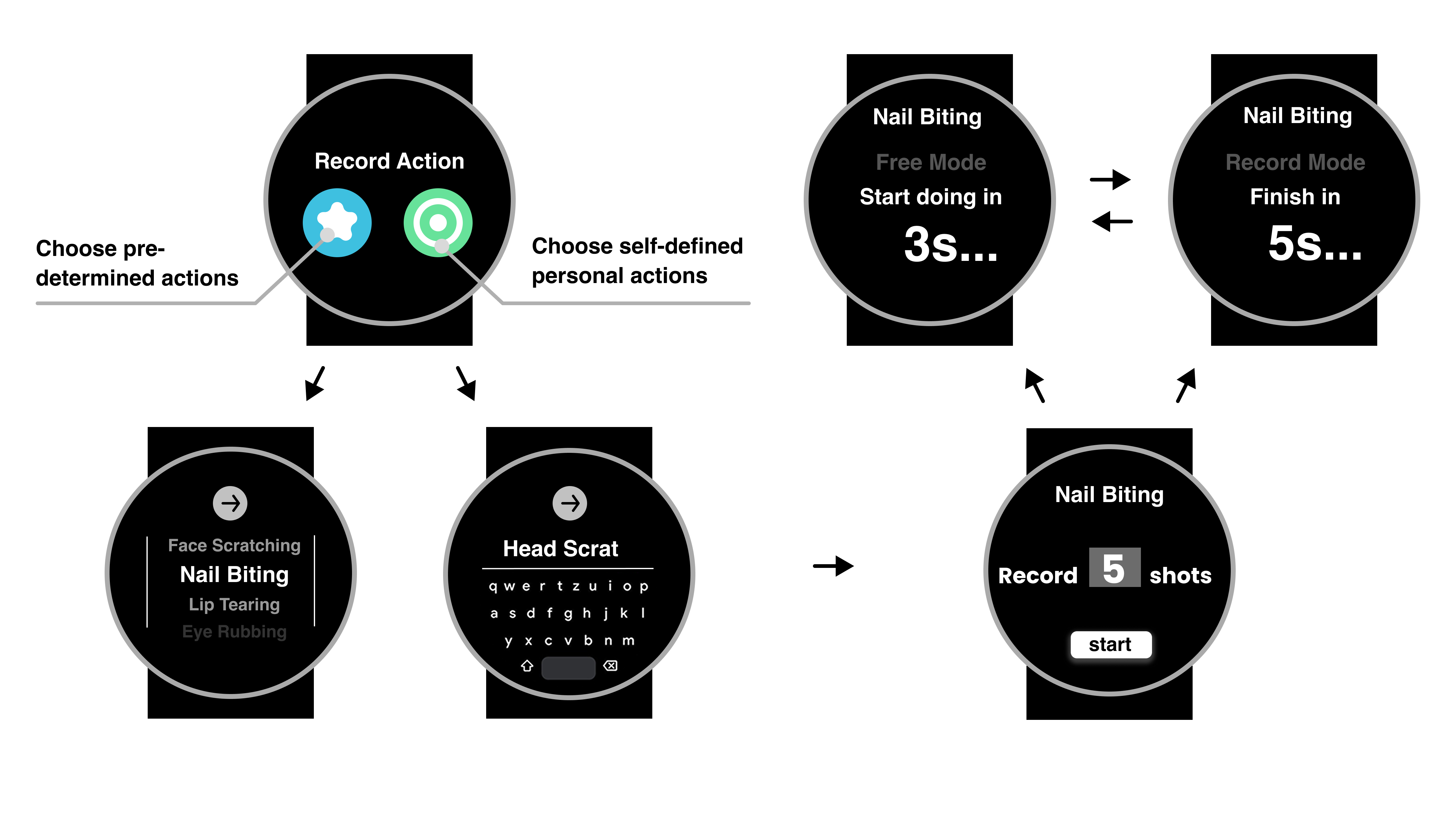

Smartwatch Interface Designs

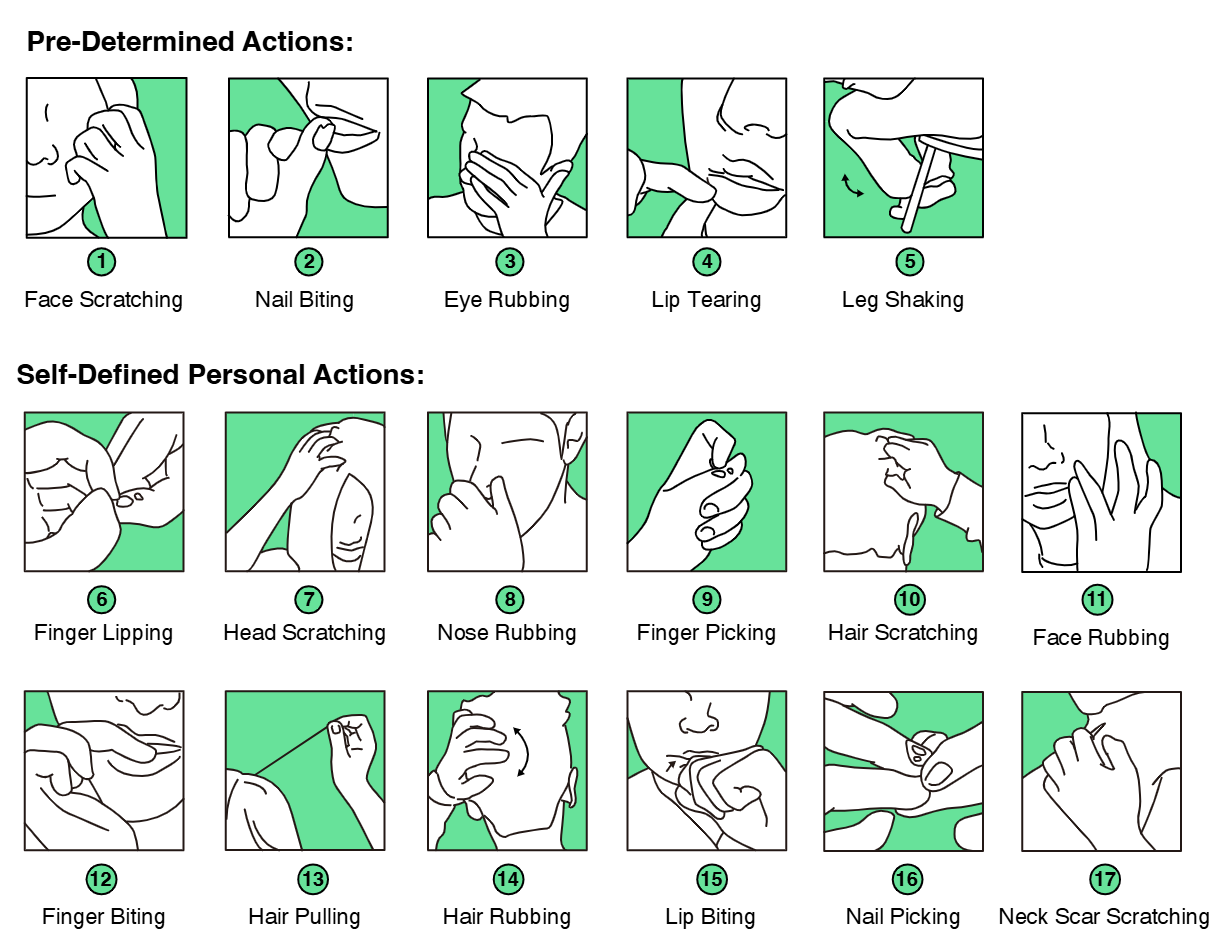

Target Actions for Evaluation

Offline Performance Evaluation

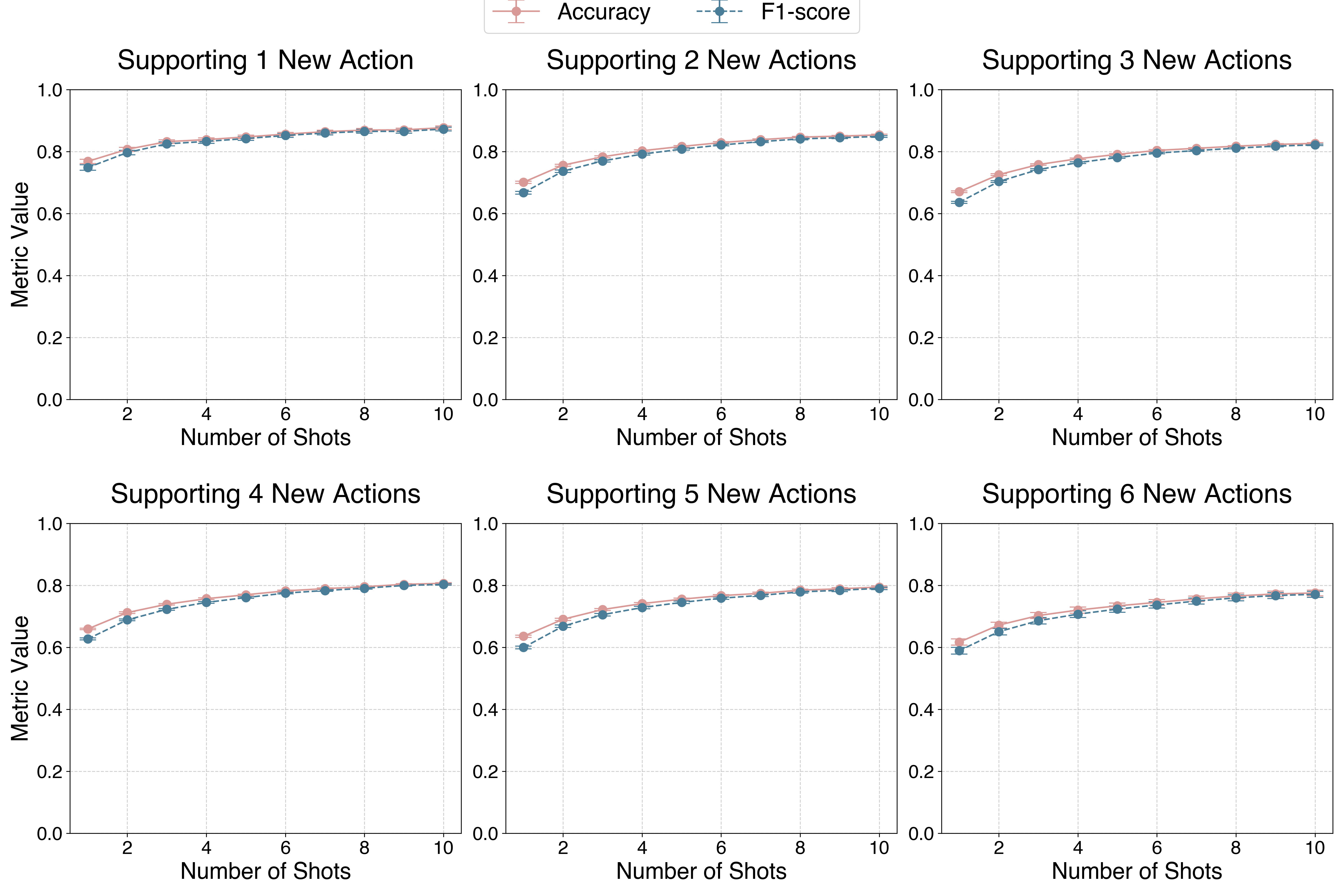

Accuracy and F1 Score of the Few-shot Learning Pipeline. We varied the number of shots, using 1 to 10 samples to train a custom model. We also experimented with adding more than one target action simultaneously (i.e., multi-class classification). Error bars indicate standard error, here and in the following figures.

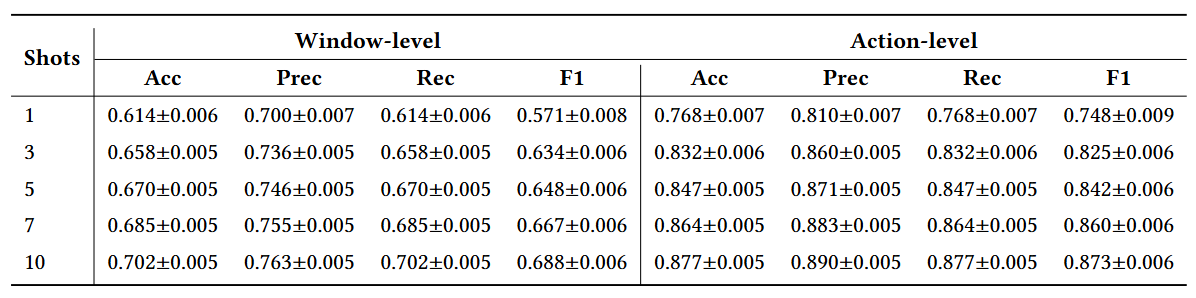

Detailed Few-shot Pipeline Performance with Different Numbers of Shots when Adding One Personal Action. Window-level results are based on each sliding window as a data point. Action-level results are the aggregation of the sliding windows after smoothing post-processing (threshold=3) and are closer to real-life application scenarios.
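The caption above does not spell out how the smoothing step works, so the following is only a minimal sketch of one plausible reading: a detection counts as an action only when at least `threshold` consecutive sliding windows are classified as the target action. The function name `windows_to_actions` and the consecutive-run rule are assumptions made for illustration, not the authors' implementation.

```python
from typing import List, Tuple


def windows_to_actions(window_preds: List[int], threshold: int = 3) -> List[Tuple[int, int]]:
    """Aggregate binary window-level predictions into action-level detections.

    Assumed rule (not confirmed by the source): a run of consecutive positive
    windows is reported as one action only if it is at least `threshold`
    windows long. Returns (start, end) window indices, with `end` exclusive.
    """
    actions: List[Tuple[int, int]] = []
    run_start = None  # index where the current positive run began, if any
    for i, pred in enumerate(window_preds):
        if pred == 1 and run_start is None:
            run_start = i  # a new positive run begins
        elif pred != 1 and run_start is not None:
            if i - run_start >= threshold:
                actions.append((run_start, i))  # keep runs that are long enough
            run_start = None
    # Close a run that extends to the end of the sequence.
    if run_start is not None and len(window_preds) - run_start >= threshold:
        actions.append((run_start, len(window_preds)))
    return actions


if __name__ == "__main__":
    preds = [0, 1, 1, 0, 1, 1, 1, 1, 0, 1, 1, 1]
    print(windows_to_actions(preds, threshold=3))
```

Under this rule, short isolated bursts of positive windows are treated as noise and dropped, which is one way action-level results can differ from window-level results.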

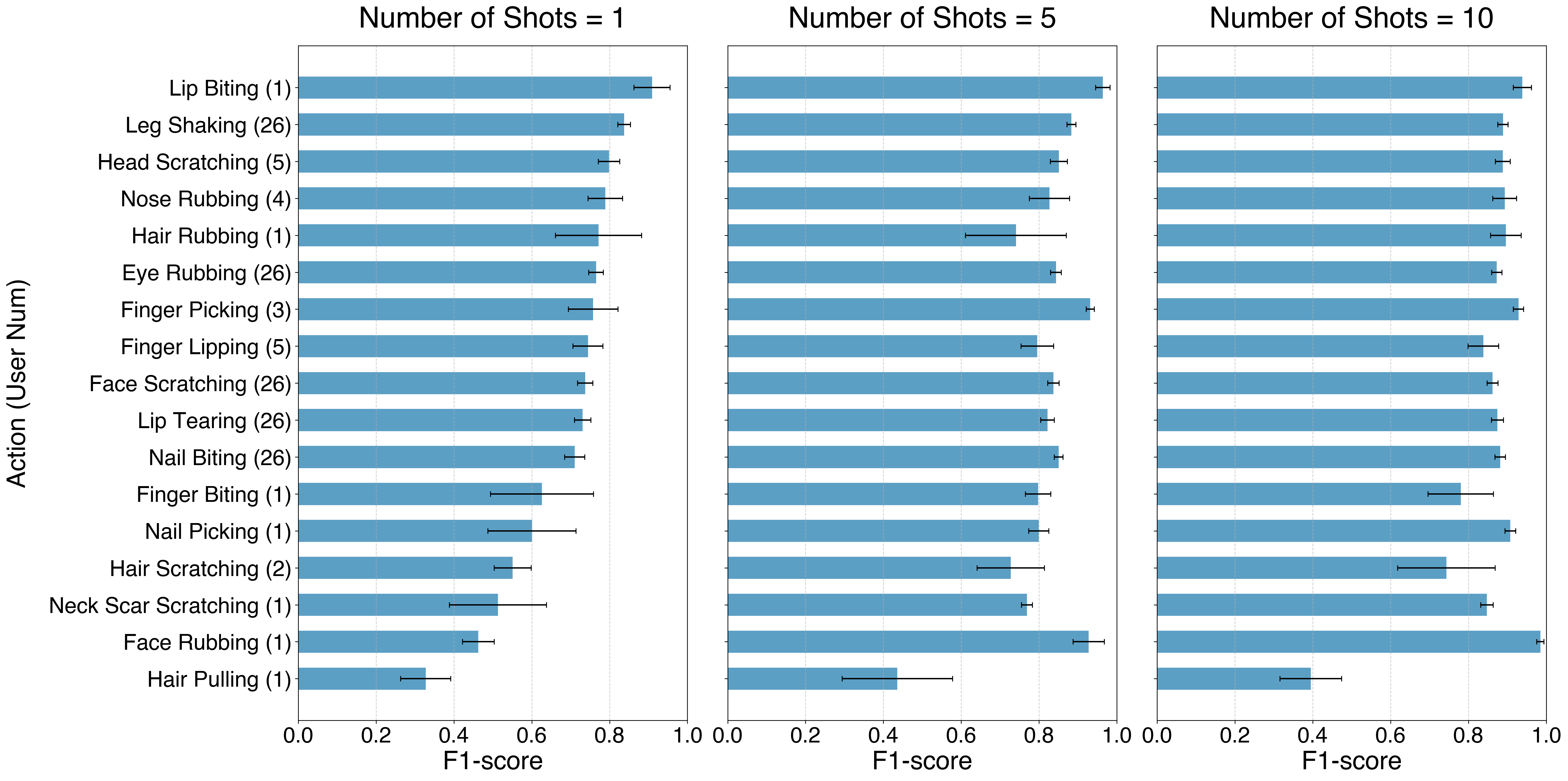

Model Performance of Recognizing Each Action with 1, 5, or 10 Shots. For consistency, each action was added alone (i.e., binary classification model). The “(User Num)” indicates how many users performed each action. The five pre-determined actions (Lip Tearing, Nail Biting, Face Scratching, Eye Rubbing, and Leg Shaking) were performed by all 26 participants, whereas the self-defined actions were each performed by fewer participants.

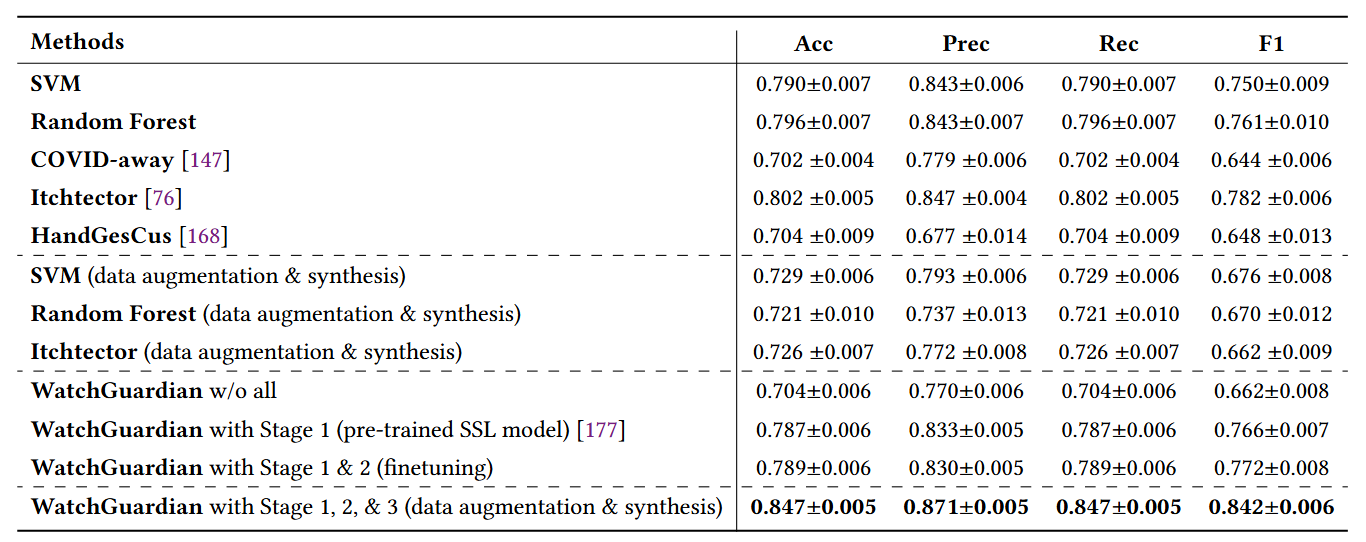

Action-Level Results of Comparison Study and Ablation Study. The same training (5 shots, one new target behavior) and testing data were used to ensure consistency.

Intervention Evaluation

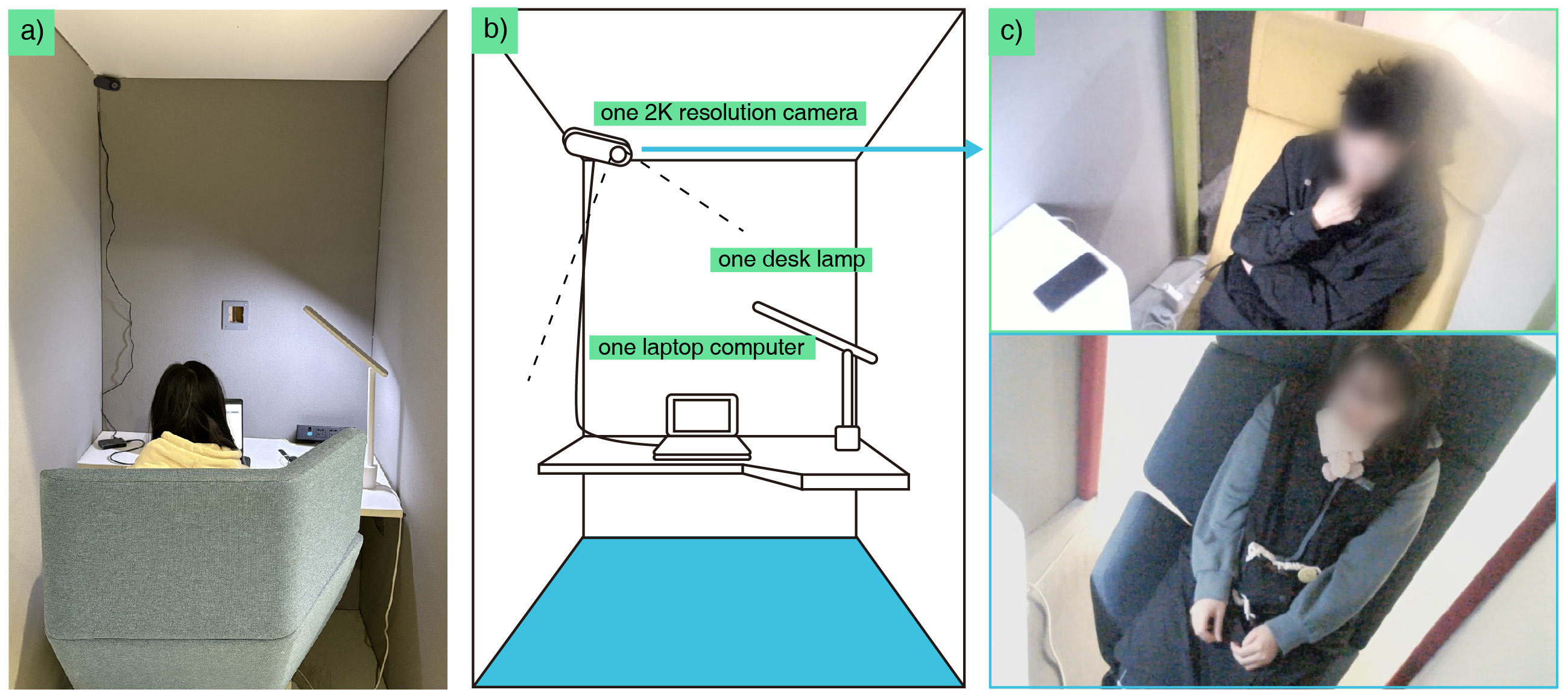

WatchGuardian Intervention Evaluation Setup. (a) A photo of a participant in the study room. (b) A sketch of the study room and apparatus setup for the intervention. (c) Video from the corner camera, which records the ground truth.

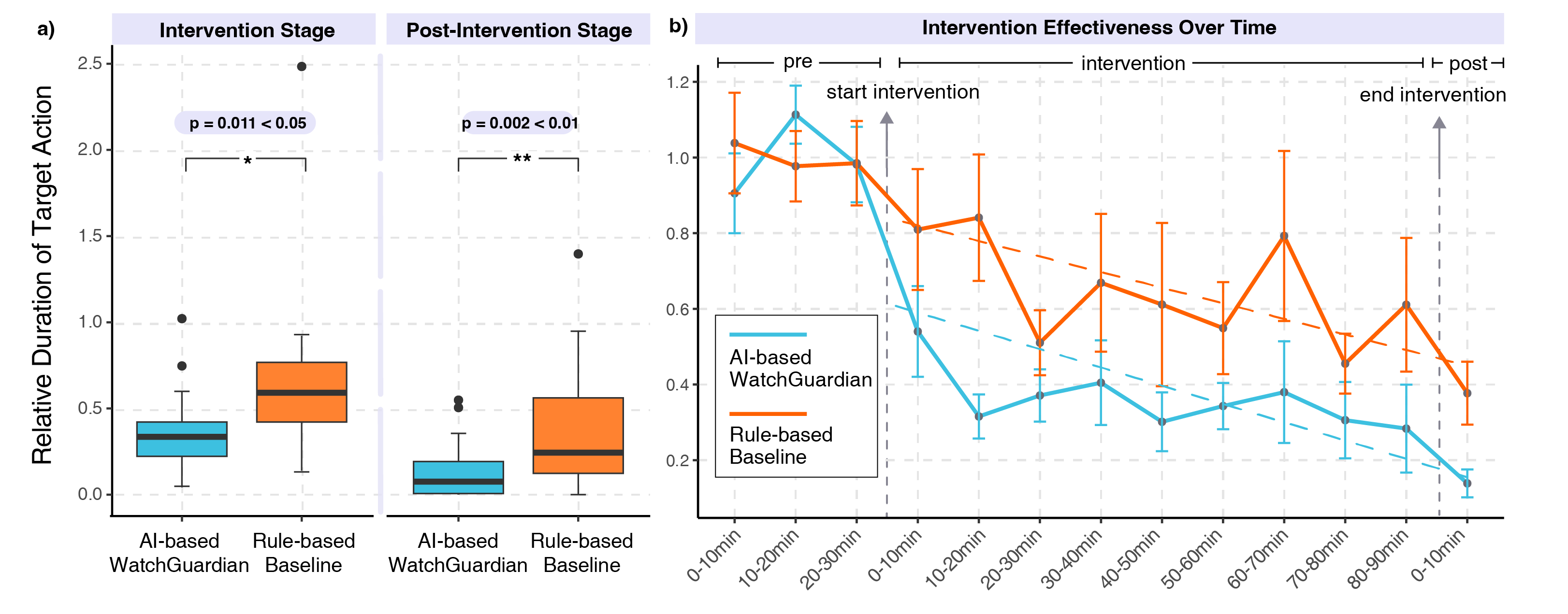

(a) Relative Duration of target action every 10 minutes in intervention and post-intervention stages (compared to the pre-intervention stage). A number lower than 1.0 means that an individual performed fewer target actions after intervention. (b) Average Relative Duration of target action over time. The dashed lines fit the last 10 minutes of the pre-intervention stage and the rest of the session.
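As a rough illustration of the metric in this caption, the sketch below normalizes the time spent on the target action in each later 10-minute bin by a pre-intervention baseline. Using the mean per-bin duration of the pre-intervention stage as the baseline is an assumption made here; the function name `relative_durations` and the sample numbers are hypothetical.

```python
from typing import List


def relative_durations(pre_bins: List[float], later_bins: List[float]) -> List[float]:
    """Normalize per-bin target-action durations by a pre-intervention baseline.

    pre_bins:   seconds of target action in each 10-minute pre-intervention bin.
    later_bins: seconds of target action in each 10-minute bin of the
                intervention and post-intervention stages.
    Assumption: the baseline is the mean per-bin duration before intervention.
    """
    if not pre_bins:
        raise ValueError("need at least one pre-intervention bin")
    baseline = sum(pre_bins) / len(pre_bins)
    if baseline == 0:
        raise ValueError("baseline duration is zero; relative duration is undefined")
    return [duration / baseline for duration in later_bins]


if __name__ == "__main__":
    # Hypothetical numbers, for illustration only.
    pre = [60.0, 40.0]            # baseline of 50 s per 10-minute bin
    later = [50.0, 25.0, 10.0]
    print(relative_durations(pre, later))
```

A value below 1.0 in the output corresponds to a bin in which the participant spent less time on the target action than in the pre-intervention stage.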

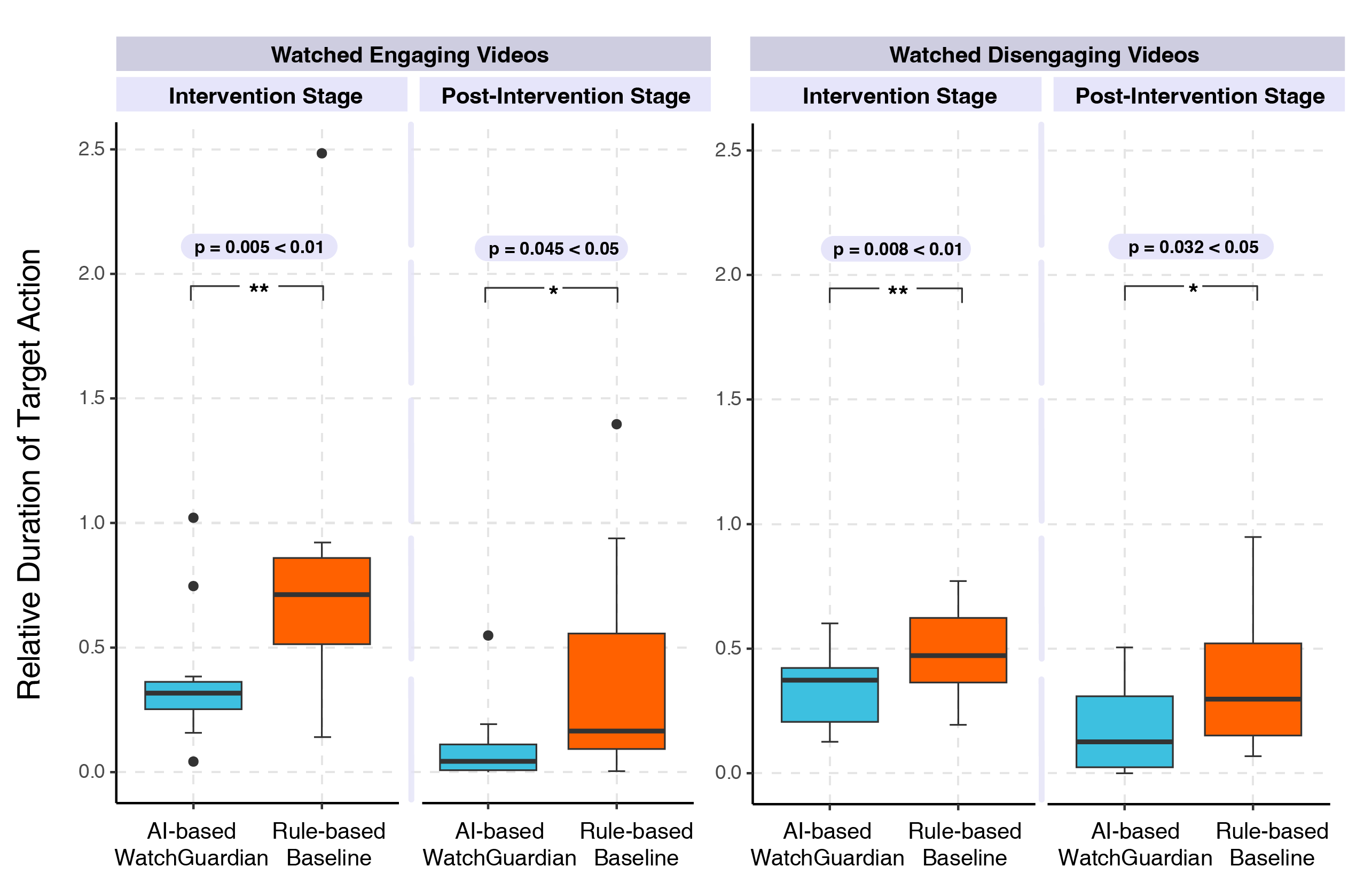

(a) Relative duration of target action for participants who watched engaging videos. (b) Relative duration of target action for participants who watched disengaging videos.

Video Demo

Full Video

Paper

BibTeX

@article{lei2025watchguardian,
  title={Watchguardian: Enabling user-defined personalized just-in-time intervention on smartwatch},
  author={Lei, Ying and Cao, Yancheng and Wang, Will Ke and Dong, Yuanzhe and Yin, Changchang and Cao, Weidan and Zhang, Ping and Yang, Jingzhe and Yao, Bingsheng and Peng, Yifan and others},
  journal={ACM Transactions on Computing for Healthcare},
  year={2025},
  publisher={ACM New York, NY}
}